Specifying Cabinets for AI: 6 Key Considerations for Scalable, High-Density

AI and Data Center Cabinet Requirements

As AI adoption accelerates, the demands on data centers are evolving at an unprecedented pace. AI workloads require significantly more power and more servers, which in turn generate more heat. The cabinets housing this infrastructure must handle the increased weight, higher equipment densities, and more demanding cooling requirements.

Data center engineers are currently facing a significant challenge: selecting cabinets that can meet the demands of today’s high-performance computing (HPC) applications and machine learning models, while also providing the flexibility to support the next generation. This is no easy task.

So, what are the key factors data center engineers should consider when specifying the right cabinet for AI workloads?

Load Capacity

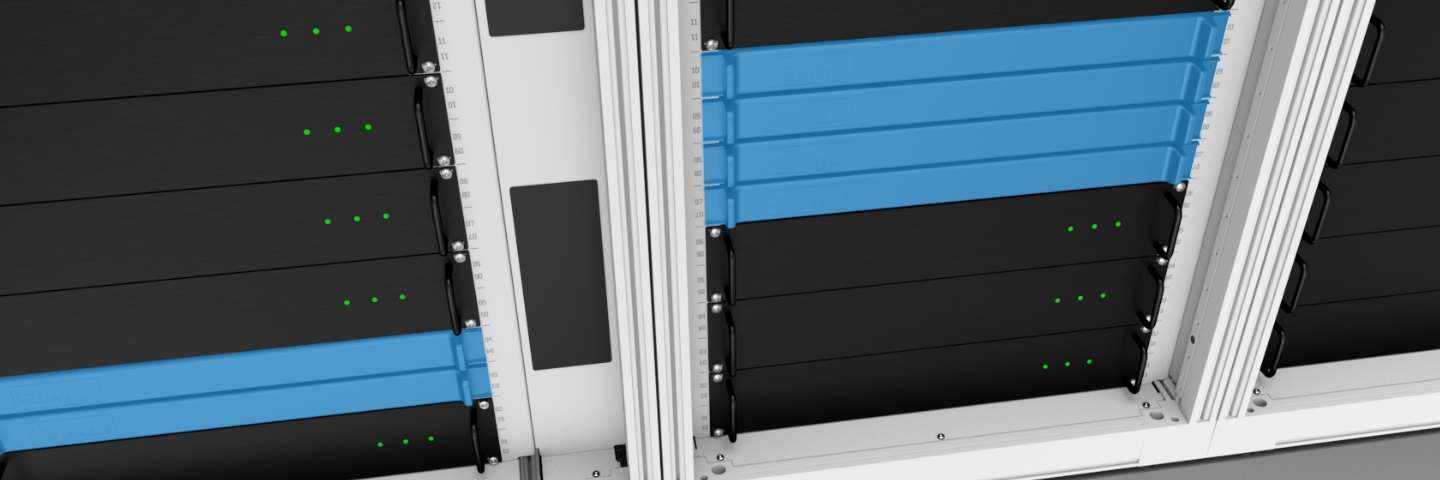

Traditional data center cabinets are designed for 1U and 2U servers that typically weigh around 8 kg (17 pounds) per rack unit (RU). Servers built around AI-optimized graphics or tensor processing units are significantly heavier. For instance, an NVIDIA H100-based AI server can weigh up to 16.5 kg (36 pounds) per RU, meaning a fully loaded AI rack may need to support 5,000 pounds (about 2,268 kg) of static weight.

It’s essential to select cabinets rated for the increased weight of your AI servers. Look for a static load rating of up to 5,000 pounds so the cabinet can also handle future expansion.
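To make the weight comparison concrete, the per-RU figures quoted above can be turned into a rough static-load estimate. The sketch below is only illustrative: the number of populated rack units and the `ancillary_kg` allowance (for PDUs, cabling, or cooling hardware) are hypothetical inputs, not figures from any vendor datasheet, and the function names are made up for this example.

```python
"""Rough static-load estimate for a cabinet, based on per-RU server weight."""

KG_PER_LB = 0.45359237  # exact definition of the international pound


def kg_to_lb(kg: float) -> float:
    """Convert kilograms to pounds."""
    return kg / KG_PER_LB


def equipment_load_kg(populated_ru: int, kg_per_ru: float,
                      ancillary_kg: float = 0.0) -> float:
    """Server weight plus an assumed allowance for PDUs, cabling, manifolds."""
    if populated_ru < 0 or kg_per_ru < 0 or ancillary_kg < 0:
        raise ValueError("inputs must be non-negative")
    return populated_ru * kg_per_ru + ancillary_kg


def headroom_lb(rated_static_lb: float, load_kg: float) -> float:
    """Remaining capacity, in pounds, against the cabinet's static rating."""
    return rated_static_lb - kg_to_lb(load_kg)


if __name__ == "__main__":
    # 48 populated RU is an assumption chosen for illustration only.
    for label, kg_per_ru in (("traditional", 8.0), ("AI (H100-class)", 16.5)):
        load = equipment_load_kg(populated_ru=48, kg_per_ru=kg_per_ru)
        print(f"{label}: {load:.0f} kg ({kg_to_lb(load):.0f} lb), "
              f"headroom vs 5,000 lb rating: {headroom_lb(5000, load):.0f} lb")
```

Servers are only part of the total. A real specification would also account for PDUs, cable bundles, liquid-filled manifolds, and rear door heat exchangers, and would check the cabinet's dynamic (rolling or shipping) rating separately from its static rating.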

Rack Dimensions – Size Matters

Space is at a premium in today's high-density environments, where making use of every inch of cabinet space is crucial. To accommodate AI infrastructure, cabinet heights are increasing from the traditional 42U to 47U range to between 47U and 52U. Widths are also increasing, from 600 mm (24 inches) to 800 mm (31 inches), and depths are expanding from 1000 mm (39 inches) to 1400 mm (55 inches). For cabinets cooled by direct-to-chip liquid cooling, extra depth is especially recommended because of the space the manifolds require.

Choosing an AI-ready cabinet that is taller, deeper, and wider provides the flexibility needed to accommodate evolving hardware requirements without replacing the cabinet infrastructure or adding more cabinets.

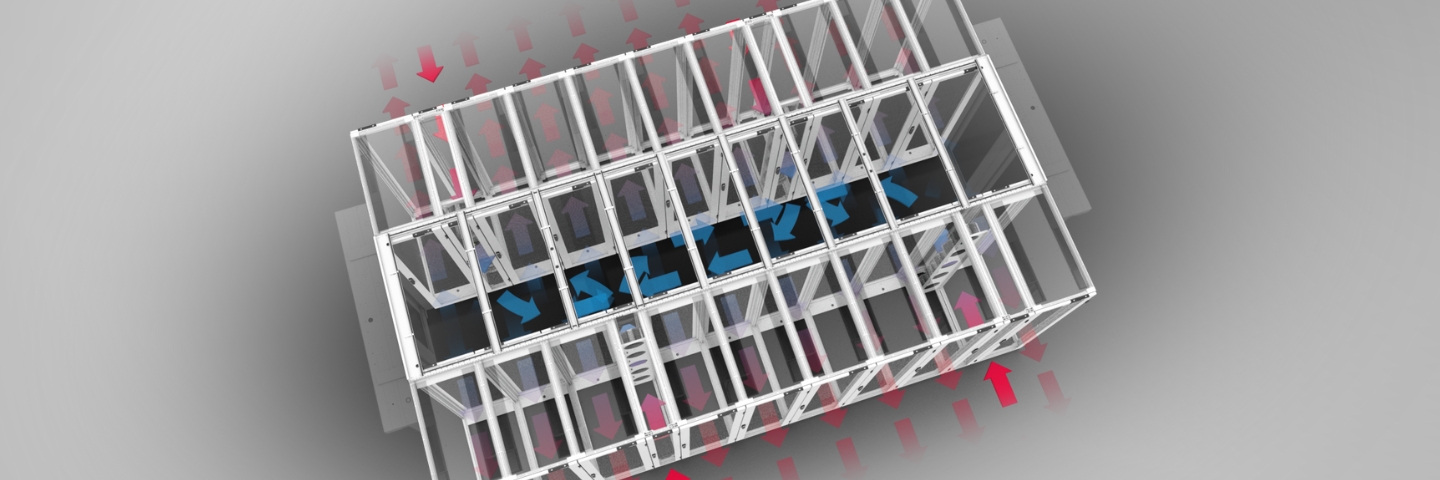

Heat and Airflow Optimization

As the power density of AI computing increases, so does the heat generated. AI racks can consume 100 kW per cabinet or more, over ten times the power draw of conventional server racks. The additional cabling required for AI workloads can also impede cooling by blocking airflow paths and preventing heat from dissipating properly.

AI cabinets designed to optimize airflow can significantly reduce the power required to cool AI infrastructure. Engineers should look for cabinets with airflow management features such as blanking panels, sealed cable-entry points, floor skirts, and sealing kits, which are designed to reduce airflow waste. Additionally, the cabinets should be built with the flexibility to integrate with advanced cooling systems, such as rear door heat exchangers or liquid cooling manifolds.
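To give a sense of scale for the 100 kW figure, the airflow needed to carry away a given heat load can be estimated from the sensible-heat relation: volumetric flow = power / (air density × specific heat × temperature rise). The sketch below is a back-of-the-envelope estimate under stated assumptions: air properties near room temperature at sea level, a hypothetical 15 K inlet-to-outlet temperature rise, and a hypothetical `liquid_fraction` parameter for the share of heat removed by liquid cooling rather than air.

```python
"""Estimate the airflow needed to remove a cabinet's heat load."""

AIR_DENSITY_KG_M3 = 1.2            # approximate, about 20 °C at sea level
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of dry air
CFM_PER_M3_S = 2118.88             # cubic feet per minute in 1 m^3/s


def required_airflow_m3_s(heat_load_kw: float, delta_t_k: float,
                          liquid_fraction: float = 0.0) -> float:
    """Airflow (m^3/s) needed to carry the air-cooled share of the heat."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    air_load_w = heat_load_kw * 1000.0 * (1.0 - liquid_fraction)
    return air_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


def to_cfm(flow_m3_s: float) -> float:
    """Convert m^3/s to cubic feet per minute."""
    return flow_m3_s * CFM_PER_M3_S


if __name__ == "__main__":
    # Compare a conventional 10 kW rack with a 100 kW AI rack (15 K rise assumed).
    for load_kw in (10.0, 100.0):
        flow = required_airflow_m3_s(load_kw, delta_t_k=15.0)
        print(f"{load_kw:.0f} kW: {flow:.2f} m^3/s ({to_cfm(flow):.0f} CFM)")
```

Because the required airflow scales linearly with heat load, a tenfold increase in power means a tenfold increase in air volume at the same temperature rise. This is one reason sealing leakage paths and integrating rear door heat exchangers or liquid cooling becomes important at these densities.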

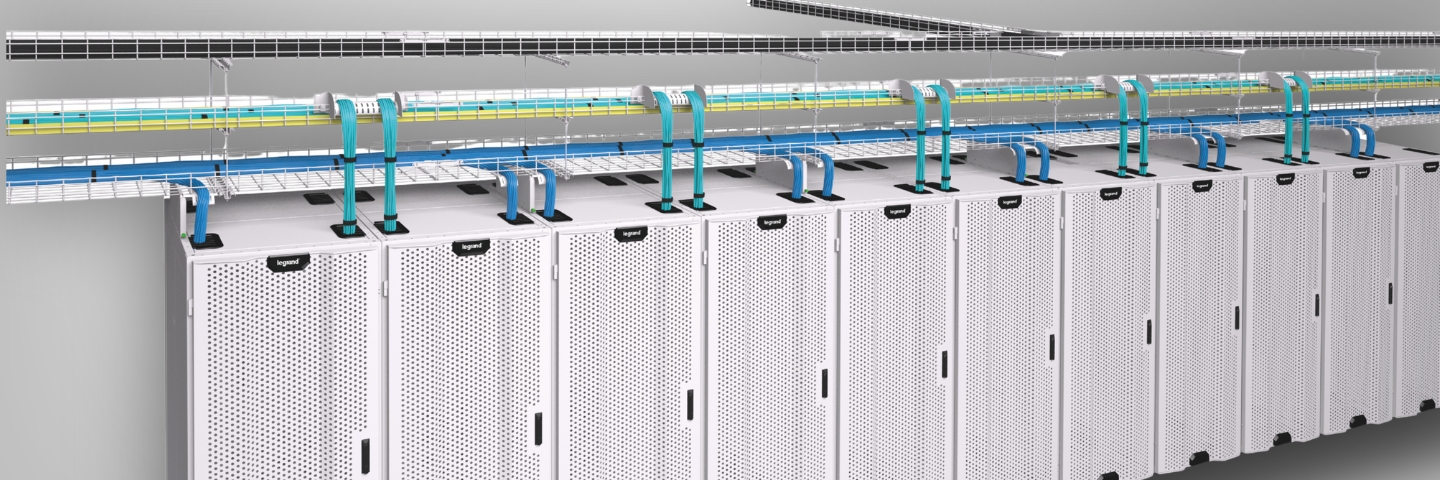

Cable Management

The high-speed inter-server connectivity required for AI clusters can create a complex web of cables. Without proper management, cables can obstruct airflow, cause hotspots, complicate maintenance, and increase the risk of downtime.

Look for cabinets with built-in vertical and horizontal cable management options, such as cable rings, grommets, and tie mounts. These features can reduce cable congestion and simplify cable maintenance and organization.

Hardware, Cooling, and Power Distribution Integration

AI computing requirements differ fundamentally from those of traditional workloads, so AI cabinets must efficiently house all the components needed for optimal performance without increasing their physical footprint.

High-density configurations can raise the number of power distribution units (PDUs) per rack from one or two to three, four, or more. Cabinets must also accommodate other key hardware components, such as rear door heat exchangers or direct-to-chip cooling solutions, as well as direct-attached copper cables.
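The jump in PDU count follows from simple arithmetic on rack load and per-PDU capacity. The sketch below uses the standard three-phase power relation (P = √3 × line voltage × current × power factor) and rounds up to whole PDUs. Every electrical value in it is an assumption for illustration: the 415 V, 60 A PDU, the 0.8 derating factor for continuous loads, and the option to double the count for redundant A/B feeds are hypothetical and should be replaced with actual PDU ratings and local electrical code requirements.

```python
"""Estimate how many PDUs a rack needs for a given load."""
import math


def pdu_capacity_kw(line_voltage_v: float, breaker_a: float,
                    derating: float = 0.8, power_factor: float = 1.0) -> float:
    """Usable capacity of one three-phase PDU, in kW (assumed derating)."""
    return (math.sqrt(3) * line_voltage_v * breaker_a
            * derating * power_factor / 1000.0)


def pdus_required(rack_load_kw: float, pdu_kw: float,
                  redundant_feeds: bool = False) -> int:
    """Whole PDUs needed to carry the load; doubled for A/B redundancy."""
    if pdu_kw <= 0:
        raise ValueError("pdu_kw must be positive")
    count = math.ceil(rack_load_kw / pdu_kw)
    return count * 2 if redundant_feeds else count


if __name__ == "__main__":
    capacity = pdu_capacity_kw(line_voltage_v=415.0, breaker_a=60.0)
    for load_kw in (10.0, 100.0):
        print(f"{load_kw:.0f} kW rack: "
              f"{pdus_required(load_kw, capacity)} PDU(s), "
              f"{pdus_required(load_kw, capacity, redundant_feeds=True)} "
              f"with redundant feeds")
```

Under these assumed ratings, each additional PDU also takes up mounting space and adds power cabling at the rear of the cabinet, which is part of why the wider and deeper cabinet formats are relevant to power distribution as well as cooling.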

Speed of Deployment

AI is evolving quickly, and data centers must deploy hardware rapidly to keep pace. Traditional off-the-shelf cabinets are assembled and populated on-site by data center teams, which can take days or even weeks. In contrast, pre-integrated cabinets accelerate speed to market by streamlining infrastructure deployment and reducing installation time and costs.

Because fully loaded AI racks are significantly heavier than traditional ones, they must be structurally engineered for safe transportation. To meet these demands, rack-and-stack cabinet solutions should comply with the ISTA 3B standard, which certifies they have been tested to withstand vibration, impact, and compression. Securing the cabinet properly during handling and transit is also essential. One effective measure is to use a sturdy wooden transport crate in place of traditional cardboard or plastic packaging, along with specially designed loading ramps.
